How to vet automation platforms before you burn two quarters

Most platform decisions are made in presentation mode. The failure comes later, when the operating model cannot absorb ownership, exception handling, and rollback discipline.

A few months ago a marketing team showed me a platform shortlist they were ready to buy. The demos were polished. The sales narrative was clean. Internally, the mood was optimistic because the tool looked like it would remove a lot of manual work. Six weeks later, the conversation had flipped. Integration assumptions were wrong. Ownership was unclear. Exceptions were multiplying. The team was now spending meetings debating edge cases instead of shipping outcomes.

That story is common because most platform decisions are still made in presentation mode, not operating mode. Teams compare visible features and overlook invisible failure paths: who owns workflow changes, what happens when inputs are dirty, how permissions and audit trails work, and how quickly the organisation can unwind the decision if it turns out to be the wrong fit. By the time those failure paths show up, they are expensive to unwind both technically and politically.

The non-obvious risk is not a feature gap. It is operating misfit. When leaders say a platform “did not work,” what they often mean is that the organisation could not absorb its operating demands. The vendor may have delivered exactly what was promised. The internal system just lacked decision rights, review cadence, and rollback discipline. So the right question is not “can this platform do it?” The right question is “can we run this platform reliably under our real constraints?”

What most teams misread about platform selection

Most evaluation processes overweight what is easiest to observe. Demos, UI screenshots, and feature matrices create the illusion of certainty. They tell you what a product can do in a controlled environment. They do not tell you what your team will do when the system is live and every automation has consequences: a lead routed to the wrong owner, a finance number duplicated, a customer emailed twice, or a workflow that silently stops because one upstream field changed.

In real companies, reliability is not a product attribute. It is a relationship between the product and the operating model around it. The same tool can look “best in class” in a demo and still be the wrong decision if it requires an ownership structure you do not have, or if the integration reality creates a maintenance burden your team cannot carry. This is why platform selection is an operating decision first and a software decision second.

If you want a simple acid test, look at what your team debates during the selection process. If most of the time is spent debating features, you are probably still in presentation mode. If most of the time is spent debating governance, permissions, failure handling, and reversibility, you are closer to operating mode. That shift is not bureaucracy. It is what protects you from spending two quarters implementing something you cannot sustain.

The decision model

You do not need a 40-page procurement process to avoid a bad choice. You need a decision model that forces the right questions early. I use three filters that can be applied in a single session: strategic fit, execution risk, and reversibility. They sound simple because they are simple. The quality comes from how honestly you answer them.

Filter 1: strategic fit

Strategic fit is not “does this have the features we want?” Strategic fit is “does this remove a currently binding constraint?” That constraint should be describable in one sentence with an expected commercial effect inside 90 days. If the constraint is vague, every demo will look good because you are shopping for possibilities rather than solving a defined problem.

Write a one-page decision brief before any serious vendor conversation. Name the constraint. Name the outcome you expect to move (time-to-lead, handoff quality, reporting confidence, cost per qualified pipeline). Name what failure would look like. If you cannot write that brief, stop. The work is not “choose a platform.” The work is “decide what we are trying to change.”
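If it helps to make the brief concrete, here is a minimal sketch of it as a checklist. The field names and the `brief_gaps` helper are my own illustrative assumptions, not a standard template; the only rules encoded are the ones above: a named constraint, a named outcome, a named failure signal, and an expected effect inside 90 days.

```python
from dataclasses import dataclass


@dataclass
class DecisionBrief:
    # All field names are hypothetical, chosen to mirror the brief described above.
    constraint: str               # the binding constraint, in one sentence
    outcome_metric: str           # e.g. "time-to-lead" or "handoff quality"
    failure_signal: str           # what failure would look like
    effect_window_days: int = 90  # when the commercial effect should show up


def brief_gaps(brief: DecisionBrief) -> list[str]:
    """Return the names of the parts of the brief that are still missing or too loose."""
    gaps = []
    if not brief.constraint.strip():
        gaps.append("constraint")
    if not brief.outcome_metric.strip():
        gaps.append("outcome_metric")
    if not brief.failure_signal.strip():
        gaps.append("failure_signal")
    if brief.effect_window_days > 90:
        gaps.append("effect_window_days")
    return gaps


draft = DecisionBrief(
    constraint="Inbound leads wait two days before reaching an owner",
    outcome_metric="time-to-lead",
    failure_signal="",
)
print(brief_gaps(draft))
```

An empty list means the brief is complete enough to take into a vendor conversation. Anything else is the work to do first.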

Filter 2: execution risk

Execution risk is the question teams avoid because it is uncomfortable: what fails first in our environment? Do not ask for the ideal architecture. Ask about your actual stack. Where do data transformations happen? How are permissions handled? What breaks when one upstream field changes? How are errors surfaced? Who gets alerted? What does the audit trail look like when something goes wrong?

A strong platform can still be a high-risk choice if it assumes clean data, perfect discipline, and a centralised owner. Most teams have the opposite: messy inputs, changing requirements, and distributed ownership. Risk is not “will the vendor deliver.” Risk is “will the organisation keep the system correct as reality changes.” If your team cannot answer who owns workflow changes, who approves exceptions, and who is accountable for rollback, you are not ready to buy. You are ready to create a new source of operational debt.

Filter 3: reversibility

Reversibility is the filter that saves you when you are wrong. In most companies, the real cost of a platform mistake is not the subscription. It is the switching cost: new workflows built, teams retrained, reporting structures rewritten, and political capital spent defending the decision. When reversibility is low, teams rationalise the mistake instead of correcting it, and the system ossifies.

Ask explicitly: if we are wrong, how quickly can we unwind this decision without breaking critical processes? What data is locked in? What integrations become brittle? Can we run a parallel system for a month while we exit? Can we export everything we need in a usable shape? In practice, the platform that is slightly weaker on features but higher on reversibility is often the better decision, because it keeps the organisation learning.

Governance pre-mortem

After you apply the three filters, run a governance pre-mortem. Assume the rollout failed six months from now. List the likely causes. Most teams surface the same themes: nobody owns workflow changes, audit trails are weak, exception handling is unclear, and there is no agreed rollback path. The purpose is not to create fear. The purpose is to surface the invisible operating requirements before contract signature.

Then translate the pre-mortem into three concrete controls. First, define decision rights: who can change workflows, who approves exceptions, and who is accountable for outcomes. Second, define a review cadence: an operating review every two weeks for the first eight weeks is often enough to catch drift early. Third, define rollback discipline: what would make you pause expansion, what would make you roll back, and what data you need to preserve to do it cleanly.
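One way to make the three controls hard to skip is to write them down as a record and generate the review dates up front. The sketch below is illustrative only: the field names are hypothetical, and it reads the review cadence as one review every 14 days through the eighth week after go-live.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class GovernanceControls:
    # Hypothetical field names mirroring the three controls described above.
    workflow_change_owner: str     # decision rights: who can change workflows
    exception_approver: str        # decision rights: who approves exceptions
    outcome_owner: str             # decision rights: who is accountable for outcomes
    pause_triggers: list[str]      # rollback discipline: what pauses expansion
    rollback_triggers: list[str]   # rollback discipline: what forces a rollback
    data_to_preserve: list[str]    # rollback discipline: what a clean exit needs


def review_dates(go_live: date, interval_days: int = 14, horizon_weeks: int = 8) -> list[date]:
    """List operating review dates, one per interval, up to the horizon after go-live."""
    end = go_live + timedelta(weeks=horizon_weeks)
    dates = []
    current = go_live + timedelta(days=interval_days)
    while current <= end:
        dates.append(current)
        current += timedelta(days=interval_days)
    return dates


schedule = review_dates(date(2025, 1, 6))
print([d.isoformat() for d in schedule])
# prints ['2025-01-20', '2025-02-03', '2025-02-17', '2025-03-03']
```

Putting the four reviews in calendars before contract signature is a small test of whether the named owners actually accept the role.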

If your organisation is deploying automation that touches customers, revenue, or reporting, treat governance as a safety mechanism, not a compliance ritual. A good system makes it easy to ship changes and hard to ship the wrong changes. That is why operating design matters as much as tooling. If you need help defining the operating model first, start with Operational Marketing Design rather than trying to buy your way out of coordination problems.

Counterargument: too much diligence can slow strategic momentum

A fair counterargument is that extensive vetting slows momentum and causes teams to miss strategic windows. That is true in one sense. Over-analysis can create decision paralysis, especially when stakeholders use “more diligence” as cover for avoiding commitment. The trade-off is that under-analysis creates operational debt that compounds quietly. It feels faster in the first month and then drags every week after.

The goal is disciplined speed. Short evaluation cycles with explicit decision criteria. A timeboxed test that pressures the weak points. A decision brief that prevents scope drift. If your process is either endless diligence or blind urgency, the problem is not your platform shortlist. The problem is your decision discipline.

30/60/90 rollout and review cadence

Once you select a platform, the work starts. The selection decision is only useful if it creates a system your team can run.

In the first 30 days, choose one workflow that matters and instrument it. Make the workflow explicit: inputs, transformations, owners, and failure handling. Establish the minimum audit trail you need. Define the metrics you will use to judge whether the workflow is improving outcomes or just creating noise. If you do not have measurement confidence, the rollout will become politics. If you need that foundation, start with Measurement and Attribution Consulting.

By 60 days, expand only if the operating model is stable. Stable does not mean perfect. It means exception handling is predictable, ownership is clear, and the system is not silently degrading. This is where teams often fail: they scale because the platform “works” in the happy path, while the edges are still chaotic. A two-week review cadence helps. If decision latency increases and output quality declines, pause. The pause is not failure. It is how you prevent drift from becoming a permanent cost.
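The pause rule is simple enough to write down before the rollout starts, which removes the temptation to renegotiate it mid-flight. Here is a minimal sketch, assuming you record two numbers at each review; the metric names and units are hypothetical and should be replaced with whatever your team actually measures.

```python
from dataclasses import dataclass


@dataclass
class ReviewSnapshot:
    # Hypothetical metrics recorded at each operating review.
    decision_latency_days: float  # average time to decide on a workflow change
    output_quality: float         # any agreed score where higher is better


def should_pause(previous: ReviewSnapshot, current: ReviewSnapshot) -> bool:
    """Pause expansion when latency has risen and quality has fallen since the last review."""
    latency_up = current.decision_latency_days > previous.decision_latency_days
    quality_down = current.output_quality < previous.output_quality
    return latency_up and quality_down


week_4 = ReviewSnapshot(decision_latency_days=2.0, output_quality=0.90)
week_6 = ReviewSnapshot(decision_latency_days=3.5, output_quality=0.80)
print(should_pause(week_4, week_6))
# prints True
```

The rule requires both signals to move in the wrong direction. A stricter team could pause on either one alone; what matters is that the threshold is agreed before the data arrives.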

By 90 days, you should have enough evidence to answer the only question that matters: did this remove the constraint you named in the decision brief? If yes, scale deliberately and document the operating pattern. If no, do not keep expanding out of pride. Use the reversibility you protected earlier and unwind. A smaller team that can make and correct decisions quickly will outperform a larger team trapped in sunk-cost thinking.

Next step

If you are evaluating an automation platform right now, send me your shortlist and your current operating constraint. I will give you the first three operating-risk questions to run in your next vendor meeting and the one governance control you should put in place before you sign anything.

Evidence and references

McKinsey has repeatedly argued that transformation outcomes are shaped by operating model discipline, not only by tool choice. See “Why do most transformations fail?” and “How to get your operating model transformation back on track” for the recurring patterns that show up when governance, ownership, and cadence are weak.

If your automation program includes AI-enabled decisioning, the governance problem gets sharper. The NIST AI Risk Management Framework (AI RMF 1.0) is a useful reference for building explicit risk controls, even if your implementation is not “AI-heavy” yet.

Related reading on this site: The Marketing Leadership Role Is Being Rewritten in Real Time (decision quality and cadence), and CRO and Funnel Diagnostics (how to treat operational failure modes as measurable leaks rather than opinions).

More on Digital Channel Strategy

The hiring signals boards use to judge marketing leadership

Boards usually assume marketing leadership hires fail because they picked the wrong person. More often, the failure starts earlier: the business has not defined the real growth constraint, the operating conditions for success, or the size of commitment it can justify before it learns.

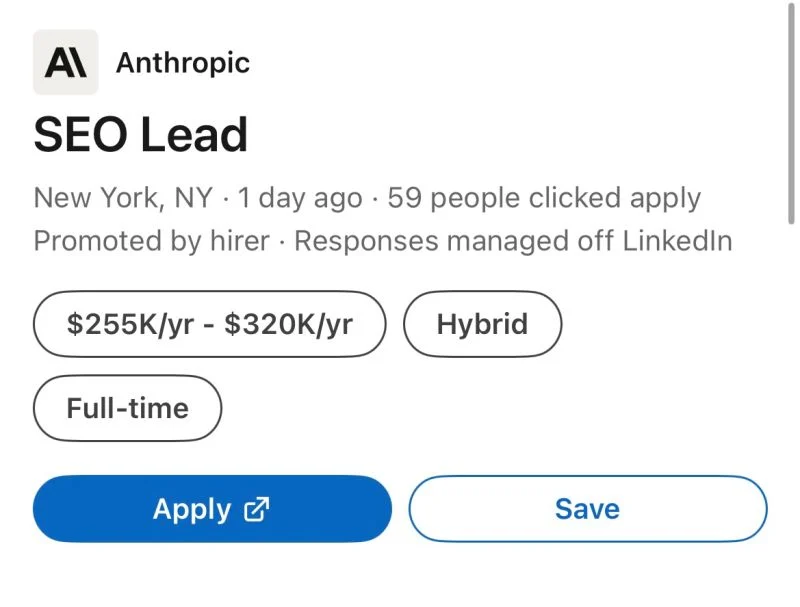

Why AI companies are hiring for the jobs they’re supposed to replace

AI makes execution cheaper, not judgment. This essay explains why the companies building the tools still hire for SEO, CRO, and web strategy, and how to decide which parts of marketing still need human ownership.

The marketing leadership role is being rewritten in real time

The leadership mandate is shifting from campaign output to decision quality under ambiguity. The leaders who gain influence are the ones who translate marketing into fundable trade-offs and build learning cadence.